The most common statistical tool to describe and evaluate the link between variables is linear regression. There are two types of linear regression:

- Simple linear regression is a statistical approach that makes it possible to assess the linear relationship between two quantitative variables. More precisely, it enables the relationship to be quantified and its significance to be evaluated.
- Multiple linear regression is a generalization of simple linear regression, in the sense that this approach makes it possible to evaluate the linear relationships between a response variable (quantitative) and several explanatory variables (quantitative or qualitative).

In the real world, multiple linear regression is used more frequently than simple linear regression. Multiple linear regression makes it possible to evaluate the relationship between two variables while controlling for the effect (i.e., removing the effect) of other variables. With data collection becoming easier, more variables can be included and taken into account when analyzing data.

Multiple linear regression being such a powerful statistical tool, I would like to present it so that everyone understands it, and perhaps even uses it when deemed necessary. However, I cannot afford to write about multiple linear regression without first presenting simple linear regression. So after a reminder about the principle and the interpretations that can be drawn from a simple linear regression, I will illustrate how to perform multiple linear regression in R. I will also show, in the context of multiple linear regression, how to interpret the output and discuss its conditions of application. I will then conclude the article by presenting more advanced topics directly linked to linear regression.

Simple linear regression is an asymmetric procedure in which one of the variables is considered the response, or the variable to be explained. It is also called the dependent variable, and is represented on the \(y\)-axis.
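To make the idea of "controlling for the effect of other variables" concrete, here is a minimal sketch in plain Python rather than R. Everything in it is an assumption made for illustration: the data are synthetic (built so that y = 1 + 2·x1 + 3·x2 exactly), and the helpers `solve` and `fit_ols` are hypothetical names written for this example, not part of any library.

```python
def solve(A, b):
    """Solve the linear system A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def fit_ols(columns, y):
    """Least-squares coefficients [b0, b1, ...] from the normal equations X'X b = X'y."""
    n = len(y)
    X = [[1.0] + [col[i] for col in columns] for i in range(n)]  # intercept column first
    p = len(X[0])
    XtX = [[sum(X[i][j] * X[i][k] for i in range(n)) for k in range(p)] for j in range(p)]
    Xty = [sum(X[i][j] * y[i] for i in range(n)) for j in range(p)]
    return solve(XtX, Xty)


# Synthetic, illustrative data: x1 and x2 are correlated with each other.
x1 = [1, 2, 3, 4, 5, 6]
x2 = [2, 1, 4, 3, 6, 5]
y = [1 + 2 * a + 3 * b for a, b in zip(x1, x2)]

simple = fit_ols([x1], y)        # y explained by x1 only
multiple = fit_ols([x1, x2], y)  # y explained by x1 while controlling for x2

print("slope of x1, simple regression:  ", round(simple[1], 3))
print("slope of x1, multiple regression:", round(multiple[1], 3))
```

In this constructed example the simple regression attributes part of the effect of x2 to x1, because the two explanatory variables move together. The multiple regression recovers the slope of 2 that was used to build the data.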
The three sums of squares (SST, SSR and SSE) are related by \(SST = SSR + SSE\). So, given the values of any two sums of squares, the third one can easily be found.

One of the most important parts of regression is testing for significance. The two tests for significance, the t test and the F test, are examples of hypothesis tests. Hypothesis testing can be done using our Hypothesis Testing Calculator. In the t test, the test statistic is used to conduct the hypothesis test, using a t distribution with \(n - 2\) degrees of freedom. In simple linear regression, the F test amounts to the same hypothesis test as the t test. The only difference will be the test statistic and the probability distribution used.

Confidence intervals and prediction intervals can be constructed around the estimated regression line. In both cases, the intervals will be narrowest near the mean of \(x\) and get wider the further they move from the mean. The difference between them is that a confidence interval gives a range for the expected value of \(y\), whereas a prediction interval gives a range for the predicted value of \(y\). Confidence intervals will be narrower than prediction intervals.

In a simple linear regression, there is only one independent variable (\(x\)). However, we may want to include more than one independent variable to improve the predictive power of our regression. This is known as multiple regression, which can be solved using our Multiple Regression Calculator.

Remember that descriptive statistics is a branch of statistics that allows you to describe your data at hand. Inferential statistics (with the popular hypothesis tests and confidence intervals) is another branch of statistics that allows you to make inferences, that is, to draw conclusions about a population based on a sample. The last branch of statistics is about modeling the relationship between two or more variables.
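The claim that the t test and the F test amount to the same test in simple linear regression can be checked numerically. The sketch below uses the usual textbook formulas for testing whether the slope is zero, which the text does not spell out, so treat them as assumptions: \(t = b_1 / s_{b_1}\) with \(s_{b_1} = \sqrt{MSE / \sum (x - \bar{x})^2}\), and \(F = MSR / MSE\). The five data points are made up for illustration.

```python
import math

# Illustrative data only (not from the article).
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
n = len(x)

# Least-squares slope and intercept.
x_bar = sum(x) / n
y_bar = sum(y) / n
s_xx = sum((xi - x_bar) ** 2 for xi in x)
s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
b1 = s_xy / s_xx
b0 = y_bar - b1 * x_bar

# Sums of squares for the fitted line.
y_hat = [b0 + b1 * xi for xi in x]
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))
ssr = sum((fi - y_bar) ** 2 for fi in y_hat)

# t statistic for H0: slope = 0, with n - 2 degrees of freedom.
mse = sse / (n - 2)
se_b1 = math.sqrt(mse / s_xx)
t_stat = b1 / se_b1

# F statistic with 1 and n - 2 degrees of freedom.
msr = ssr / 1
f_stat = msr / mse

print("t statistic:", round(t_stat, 4))
print("F statistic:", round(f_stat, 4))
print("t squared:  ", round(t_stat ** 2, 4))
```

With a single explanatory variable, F equals t squared, which is why both tests lead to the same conclusion. Turning either statistic into a p-value requires the t or F distribution, which is left out here to keep the sketch free of external libraries.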
The coefficient of determination, denoted \(r^2\), provides a measure of goodness of fit for the estimated regression equation. Before we can find \(r^2\), we must find the values of the three sums of squares: Sum of Squares Total (SST), Sum of Squares Regression (SSR) and Sum of Squares Error (SSE).
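As a rough illustration of how the three sums of squares lead to \(r^2\), the following sketch computes them for a small made-up data set. It assumes the standard definitions, which are not written out in the text: SST sums the squared deviations of \(y\) from its mean, SSR sums the squared deviations of the fitted values from that mean, SSE sums the squared residuals, and \(r^2 = SSR / SST\).

```python
# Illustrative data only (not from the article).
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
n = len(x)

# Least-squares slope and intercept.
x_bar = sum(x) / n
y_bar = sum(y) / n
b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sum(
    (xi - x_bar) ** 2 for xi in x
)
b0 = y_bar - b1 * x_bar
y_hat = [b0 + b1 * xi for xi in x]

# The three sums of squares.
sst = sum((yi - y_bar) ** 2 for yi in y)               # total variation in y
ssr = sum((fi - y_bar) ** 2 for fi in y_hat)           # variation explained by the line
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))  # variation left unexplained

r_squared = ssr / sst

print("SST:", round(sst, 4), "SSR:", round(ssr, 4), "SSE:", round(sse, 4))
print("r^2:", round(r_squared, 4))
```

For these numbers the fitted line explains 60% of the variation in \(y\), and the three sums of squares satisfy SST = SSR + SSE up to floating-point rounding.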

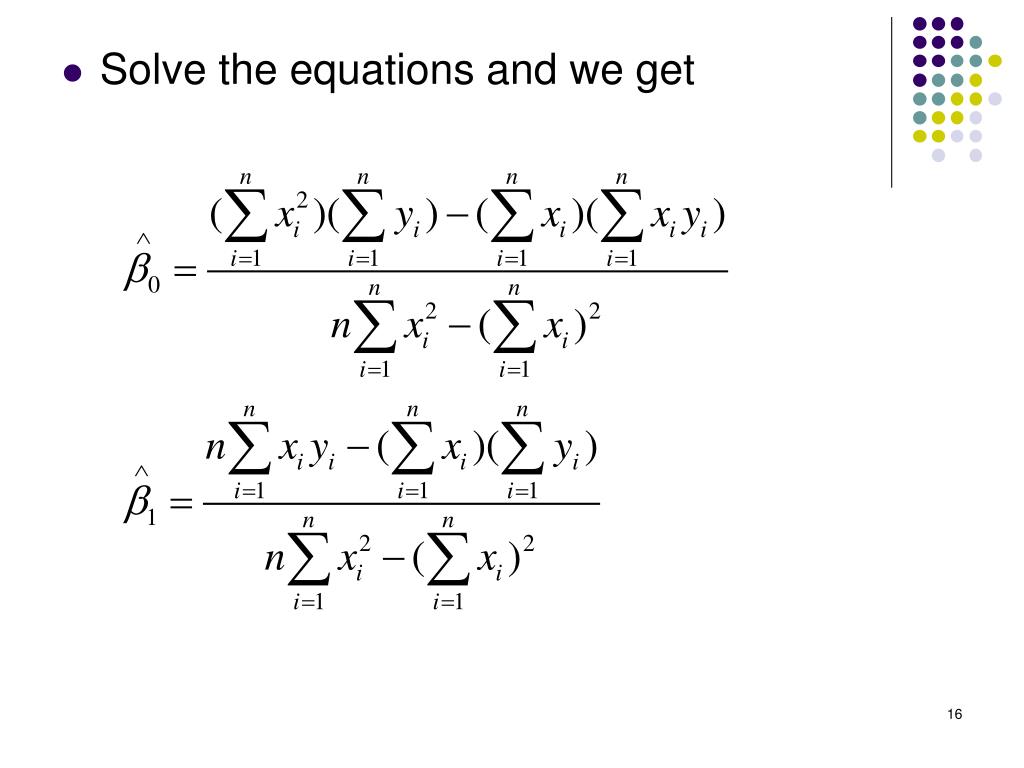

In simple linear regression, the starting point is the estimated regression equation: \(\hat{y} = b_0 + b_1 x\). It provides a mathematical relationship between the dependent variable (\(y\)) and the independent variable (\(x\)). Furthermore, it can be used to predict the value of \(y\) for a given value of \(x\). There are two things we need to get the estimated regression equation: the slope (\(b_1\)) and the intercept (\(b_0\)). The formulas for the slope and intercept are derived from the least squares method: \(\min \sum (y - \hat{y})^2\). The graph of the estimated regression equation is known as the estimated regression line.

After the estimated regression equation, the second most important aspect of simple linear regression is the coefficient of determination.
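The least squares criterion has a well-known closed-form solution, which the text refers to but does not write out: \(b_1 = \sum (x - \bar{x})(y - \bar{y}) / \sum (x - \bar{x})^2\) and \(b_0 = \bar{y} - b_1 \bar{x}\). The sketch below applies those formulas to a small made-up data set. The function name `fit_simple` and the data are assumptions chosen for illustration.

```python
# Illustrative data only (not from the article).
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]


def fit_simple(x, y):
    """Return (b0, b1) minimising the sum of squared residuals sum((y - (b0 + b1*x))**2)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = s_xy / s_xx          # slope
    b0 = y_bar - b1 * x_bar   # intercept
    return b0, b1


b0, b1 = fit_simple(x, y)

# Use the estimated regression equation to predict y for a new value of x.
y_hat_at_6 = b0 + b1 * 6

print(f"y_hat = {b0:.2f} + {b1:.2f} * x")
print(f"predicted y at x = 6: {y_hat_at_6:.2f}")
```

For these five points the estimated regression equation is approximately \(\hat{y} = 2.2 + 0.6x\), so the predicted value at \(x = 6\) is about 5.8.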
